Artificial intelligence in photography is no longer science fiction

Artificial intelligence. It was the word of the year 2022, although in reality there are two, according to the FundéuRAE (Fundación del Español Urgente). And there is no shortage of reasons because, over the past year, AI has ceased to be just another of those words that come in one ear and end up going out the other with no further consequences, and has become the name of something sufficiently relevant to start to stick in our heads. Artificial intelligence in photography is no longer science fiction.

Something similar has happened with AI as with another two-word term, climate change, which has also been part of our vocabulary for decades. Despite the warnings, we treated it as something alien to our lives until, in the midst of the energy crisis, the hottest summer in history arrived, precisely the summer of 2022, and more than one person began to grasp its meaning and the consequences of ignoring it. Welcome, then, even if it is already a little late…

Origami Sneakers. Nat Gutiérrez 2022. Midjourney+Photoshop+Topaz Gigapixel AI.

Artificial intelligence in photography

Until just over a year ago, talking about artificial intelligence was, for the vast majority of mortals, science fiction. This was certainly not the case for companies such as OpenAI, the developer of DALL-E and ChatGPT, which, I’m sure, has known what it is up to for a very long time. It was precisely DALL-E, specifically DALL-E 2, that first sparked the hype back in April last year with that famous image of an astronaut riding a horse, generated solely by artificial intelligence.

And it is not that this was the first image created by an AI, but rather that it was the one that caught more than one person off guard. Until then, the generation of images by artificial intelligence left something to be desired, with results halfway between abstraction and the most bizarre surrealism.

That realistic image of an astronaut riding through space on the back of a white horse was the starting point of a dizzying evolution, in less than a year, of AI as a generator of visual works. The controversy peaked in September 2022, when an artwork created by artificial intelligence won first prize in a fine art competition at the Colorado State Fair (USA), causing sparks to fly among illustrators and graphic artists.

But let’s take it one step at a time.

A photo of an astronaut riding a horse. Image created with DALL-E 2.

Let’s get into (my) background

I discovered photography at a very young age, when I was only 15, the same year that the Berlin Wall fell, so it is clear that the person writing these lines either already has grey hair or no longer needs a comb. I take that date as a starting point because, although I had already shot some photos with my father’s Werlisa compact, it was at the end of 1989 that I shot and developed my first “creative” photos with a reflex camera, a borrowed Olympus OM-1. I was captivated by the technique, the process and the results, which were obviously still very amateurish but sufficiently enigmatic to provoke in me a certain obsession that soon became the confirmation of my vocation for the image, at first for photography and, as time went by, for other audiovisual disciplines.

My first 10 years as a photographer were exclusively analogue, both in capture and in chemical development and printing, with black and white photography absolutely predominant. At the end of the 90s I acquired my first computer equipment, and analogue gave way to digital processes. That opened the door to other creative disciplines such as design, video and web communication projects, allowing me to make a living as a multidisciplinary professional and to create my own studio, with which I have been developing all kinds of communication, image and advertising projects for 15 years.

From 1991 to 2022 in 2 images.

I think it’s clear that a journey like this one, briefly summarised, is not possible without accepting and embracing technological advances when developing a professional and creative career in the audiovisual sector. The process, I assure you, has not been easy. It has often meant confronting certain personal “certainties” and the vulnerability one feels every time one has to leave the comfort zone one thinks one knows and controls, and learn again from another perspective. Fortunately, in my case, curiosity has always been an ally, and once I accept that there is nothing wrong with being more of a learner than a teacher, uncertainties and controversies end up giving way to the excitement of putting into practice, both creatively and professionally, what I have discovered in each new process of learning and research.

My first contact with an AI

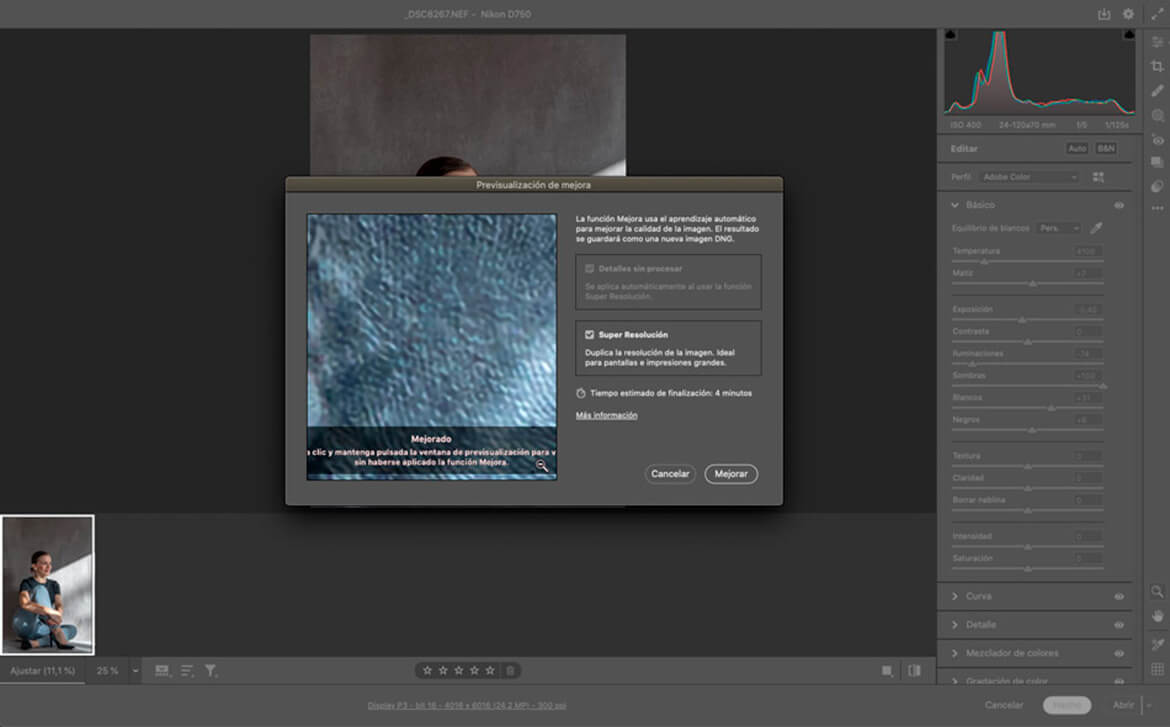

At the beginning of 2021, Adobe surprised everyone with an update to Adobe Camera Raw that included a new tool called Super Resolution. It left more than one person, including me, open-mouthed, and amounted to Adobe banging its fist on the table in the eternal debate about the sensor resolution and size to consider when buying a camera. Suddenly, the resolution of a RAW file would no longer depend exclusively on the technology of the camera, but also on software that could quadruple the pixel count of the original image by doubling its width and height. The results showed certain defects at the beginning that had to be polished (nothing that could not be solved with other Camera Raw and Photoshop tools), but they have improved over time to the point of calling into question the need for a full-frame camera loaded with megapixels.

Well, Super Resolution was made possible by an AI called Enhance Details, created just a couple of years before its inclusion in Adobe Camera Raw and trained on millions of images so that it could understand the interpolation patterns of an image and correct algorithmically the main pixelation and colour banding errors that interpolation introduces. Interpolating means filling in the information missing from the original with other ‘invented’ information in order to reach a larger size than the original. Interpolation is nothing new for those of us who work digitally with images, but the results, especially when the difference between the size of the original file and the output file was considerable, more than once left something to be desired, no matter how much post-production one put into the attempt. Then, suddenly, an AI came along to solve one of the great headaches of a photographer or digital creative and, on top of that, in an automated and fast way, with results that, as the AI continues to be trained, get closer and closer to the perfection of an original captured with a very high resolution sensor.
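
To make the idea of ‘invented’ information a little more concrete, here is a minimal sketch of classical bilinear interpolation in plain Python. It is not how Super Resolution or Enhance Details work internally (those rely on trained models); the function name `upscale_bilinear` and the tiny greyscale grid are assumptions made purely for illustration.

```python
def upscale_bilinear(pixels, factor):
    """Enlarge a greyscale image (a list of rows of numbers) by an integer factor.

    Each output pixel that falls between original samples is 'invented'
    as a weighted average of its four nearest original neighbours.
    """
    src_h, src_w = len(pixels), len(pixels[0])
    dst_h, dst_w = src_h * factor, src_w * factor
    out = []
    for y in range(dst_h):
        # Map the output row back to a (fractional) position in the source.
        sy = y * (src_h - 1) / (dst_h - 1) if dst_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(dst_w):
            sx = x * (src_w - 1) / (dst_w - 1) if dst_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend horizontally on the two neighbouring rows, then vertically.
            top = pixels[y0][x0] * (1 - fx) + pixels[y0][x1] * fx
            bottom = pixels[y1][x0] * (1 - fx) + pixels[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


# A hypothetical 2x2 image enlarged to 4x4: 12 of the 16 values are interpolated.
tiny = [[0, 100],
        [100, 200]]
big = upscale_bilinear(tiny, 2)
for row in big:
    print([round(v, 1) for v in row])
```

Only the four corner values come straight from the original; everything in between is a smooth guess, which is why large enlargements done this way tend to look soft. The AI-based tools described here aim to replace that guess with detail learned from training images.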

Adobe Camera Raw Super Resolution Tool.

And from striking the blow to breaking the table

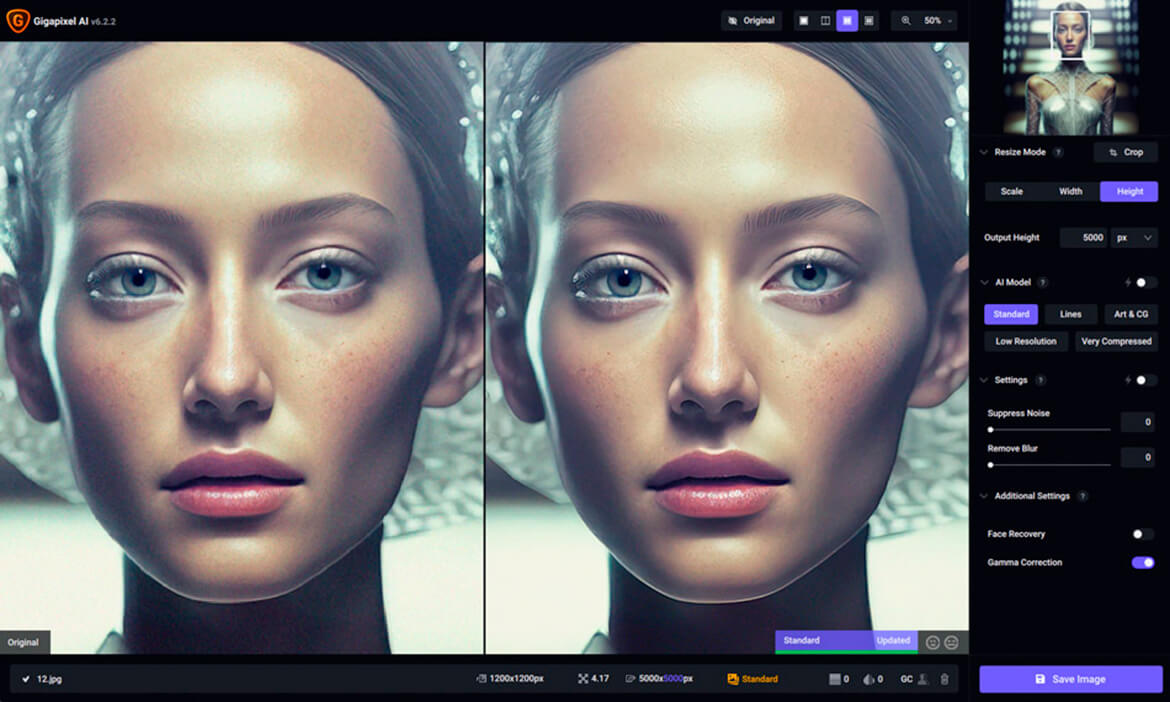

But was Adobe the pioneer when it came to including AI in its software processes? Not really, because at the end of 2019 an old acquaintance in the world of external plugins for Photoshop and Lightroom, Topaz Labs, surprised everyone with the Sharpen AI tool, capable of improving the sharpness of an image by applying a sharpening mask, correcting the focus and even stabilising a shaky image, all with AI.

My first encounter with the AI technology developed by Topaz Labs came a bit later and out of a technical need: I had to use a client’s graphic material for his web communication project, and much of it was at such a low resolution that it didn’t even reach the minimum acceptable for correct display on screen. After trying to improve the available material in Photoshop with rather poor results, I started looking for other software until I came across Topaz Gigapixel AI. From the very first test, enlarging an image only 500 pixels wide to the optimum 1920 pixels for a full-screen web header, I had enough reasons to buy a complete pack of image enhancement software that also included the latest version of Topaz Sharpen AI and Topaz DeNoise AI. With the latter you can achieve real miracles when it comes to reducing the noise of an image, provided that it was originally captured digitally; with scans of very grainy chemical film it just does not work, at least for now.

Topaz Gigapixel AI

The difference between Adobe’s Super Resolution tool and the Topaz Labs software is that, while the former only allows you to work from an original camera RAW file, with Topaz Labs you can start from virtually any type of image file, whether compressed formats such as JPG or PNG, or final art formats such as TIF. So we can not only enlarge and enhance low-resolution files, but also give any final artwork a substantial improvement in resolution and sharpness, allowing larger output sizes for photographic prints or press printing. This is where I have been able to make the most of the Topaz Labs software: it has allowed me to increase the resolution of some personal photographic projects, such as the Minimum Squares images. These were shot over the years with cameras with different sensors, and developed and edited at the time without any interpolation, and their original resolutions did not allow, especially with the older files, prints on photographic paper larger than 25×25 cm without the quality suffering.

Therefore, I am not exaggerating when I say that, thanks to artificial intelligence, I have been able to remaster a large part of the final artwork of my photographic projects without having to start from scratch with the original capture files, thus maintaining the digital post-production finish of each image, and obtaining output formats for printing on paper at considerable sizes, from much lower resolutions, not only without losing the sharpness and detail of the original image, but also improving these and other parameters and giving a chance to my photographs and designs as new material in optimal conditions for exhibition projects.

And then Midjourney arrives and the creative world is rocked

Until the summer of last year, my experience with AI didn’t go beyond using it as a powerful tool to technically improve my digital work processes. Then Midjourney burst into my life and opened up a new avenue of research that goes beyond technical issues (which it also involves, of course) and has given a real jolt to my conception of what a creative process was until now. The question was no longer how to use AI to improve my images, but how to explore AI as an image generator. DALL-E was already doing this, as I told you at the beginning of this article, as were other generative AI projects such as Stable Diffusion, but with Midjourney it has taken on another dimension. Bearing in mind that we are still at the dawn of this technology, if it continues to evolve at this speed it would not be an exaggeration to say that we are facing a major paradigm shift in the relationship between humans and technology.

Project This person doesn’t exist. Nat Gutiérrez 2022. Midjourney+Photoshop+Topaz Gigapixel AI.

The way Midjourney works is not very different from other generative AIs. You enter a text description, which we call a prompt, through a computer programme (a bot), in this case in the form of an online chat (it cannot be downloaded as an app), a format also used by another AI that is turning the world upside down, ChatGPT. To access the tool, which is still in beta, you have to register on Midjourney’s official Discord server and, once the invitation has been accepted, you can access the so-called Newbies channels, the only ones in which new users can operate. Once you have chosen a channel, the first thing that stands out is that all the content created by other users is public, to the point that you can not only create your own content from scratch but also borrow material generated by others, on the understanding, of course, that this right is reciprocal.

The free version gives you a few Fast Hours, which is, after all, what any subscription entitles you to: time to generate images quickly. Once this trial period is over, to get more time, as if this were the film In Time, you have to dig deep into your pocket and buy one of the 3 paid subscription plans on offer, ranging from 10 dollars a month to 60 dollars a month, the latter including the option of working in private mode.

Midjourney

The secret to obtaining a good result with Midjourney lies in the way we write the descriptive texts (prompts), which, for now, work best in English, although as the AI grows it will surely be possible to work with it in any language without problems. These prompts do not only include descriptive text: we can also add certain characters as commands to specify visual issues such as appearance, resolution or the type of finish we want, whether more realistic or figurative, as in a photograph or an illustration. From there, it is a matter of trying, trying and trying again until you get the images you want, as long as you keep some judgement and are not too easily seduced by the spectacular results and the speed with which they are obtained.

In my case, reaching results that convince me has not happened overnight, although I recognise that the learning process, in which I am still involved, is astonishingly fast. It must also be taken into account that AI still has its limitations. Certain aspects of the images I have generated with Midjourney have had to go through other processes, such as Photoshop to solve problems in a generated face or human figure and, lo and behold, I have also had to use Topaz AI to increase the sharpness of the image and the resolution of the final file, since the images generated by Midjourney, for now, do not exceed 1,792 x 1,024 pixels.
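
As a rough back-of-the-envelope check of why that pixel limit forces an enlargement step, the sketch below converts pixel dimensions into print size. The 300 pixels per inch figure is a common rule of thumb for photographic prints, not a value taken from this article, and the helper names `print_size_cm` and `pixels_needed` are hypothetical.

```python
CM_PER_INCH = 2.54


def print_size_cm(width_px, height_px, ppi=300):
    """Return the (width, height) in cm of a print at the given pixels per inch."""
    return (width_px / ppi * CM_PER_INCH, height_px / ppi * CM_PER_INCH)


def pixels_needed(size_cm, ppi=300):
    """Return how many pixels one side needs to print at size_cm centimetres."""
    return round(size_cm / CM_PER_INCH * ppi)


# The largest Midjourney output mentioned above, printed at an assumed 300 ppi.
w_cm, h_cm = print_size_cm(1792, 1024)
print(f"{w_cm:.1f} x {h_cm:.1f} cm")  # prints "15.2 x 8.7 cm"

# Enlargement factor needed for a hypothetical 50 cm wide exhibition print.
target_px = pixels_needed(50)
print(f"{target_px} px wide, about {target_px / 1792:.1f}x enlargement")
```

Under those assumptions a native Midjourney image is barely postcard-sized on paper, which is where an upscaler such as Topaz Gigapixel AI comes in.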

Origami Woman. Nat Gutiérrez 2022. Midjourney+Photoshop+Topaz Gigapixel AI.

So what’s the problem?

This is where it is time to open the can of worms, or Pandora’s box, as the doomsayers say.

We are faced with a technology that creates content, in this case visual, in seconds from a mere textual description, more or less complex. With it, the whole process of capturing or creating images as we have known it until now (photographing, drawing, designing…) disappears, and all we have to do is direct and educate this artificial intelligence so that it executes what we have in mind. To put it more simply, it is as if anyone could now assume the role of art director or creative director, as already exists in fields such as advertising or film, but with one difference: instead of having a complete team of professionals at your service (within each person’s production possibilities), a single technology is now capable of replacing a large part of the audiovisual production process and generating a creative work with surprising quality of execution and in record time.

This creates a first dilemma about the authorship of these images: who is the creator of the work? The machine, or whoever is directing the machine? And it is here that one has to reach into one’s own mental archive to try to find equivalents, allowing for the differences, between the current experience with AI and previous experiences without AI.

In my case, my introduction to the world of graphic design at the end of the 90s came as a result of acquiring my first computer to work with images, which led me to work as a layout artist for some graphic designers who, after years of creating content in traditional ways, such as collage, did not know how to adapt at the time to the unstoppable arrival of new technologies. My work for them consisted of generating the graphic content in programmes such as Freehand, Corel Draw or Adobe Illustrator and laying out the works following the instructions of the designer, who simply sat next to me and told me how to arrange each element, colour, shape, etc., on the screen until I reached a satisfactory final result… Having said that, who do you think signed as the author of the final works or designs, the designer or the layout artist?

I do not attempt to provide answers because I find myself having to search for them myself as I go through this research process, but I do believe that, in the case of the authorship of an image generated by artificial intelligence, the question is only the tip of the iceberg of what really lies beneath the surface. It is not so much a dilemma about authorship, but rather the unease that is undoubtedly produced by the thought of a single machine being able to reproduce the processes for which, until now, it was necessary to have a human team, to a greater or lesser extent, to be able to execute them according to the directives of a director. So, what are we talking about here: authorship or professional roles and, consequently, jobs that could be wiped out with the irruption of this technology?

Rebirth. Nat Gutiérrez 2022. Midjourney+Photoshop+Topaz Gigapixel AI.

On the other hand, there is the legal issue of how AIs work and what they feed on when generating content, and here, as with the algorithms created by the big tech companies, things are more opaque. We cannot ignore the fact that generative AIs, when creating content, feed off and learn from all the audiovisual material uploaded to the Internet, whether or not it is subject to copyright or intellectual property rights. But if we look at how an artificial intelligence programme such as Midjourney, DALL-E or Stable Diffusion works, as far as we can tell, what these applications do is not very different from what a barman does when he puts a bunch of original ingredients into a cocktail shaker and shakes them so that they mix into a new concoction. Even if the flavours and aromas of the raw materials can still be sensed in it, at the end of the process it is a drink with its own identity. Transferred to the intellectual property of images, this is already contemplated in the distinction between original work and derivative work, not without certain controversies.

What if one day it is the companies behind the AIs themselves that claim, in their terms of use, the intellectual property of the generated images? For the time being, Midjourney, DALL-E and Stable Diffusion do not include this in their terms of use. Moreover, according to these terms, the supposed copyright of the content generated through their artificial intelligence programmes belongs, for now, to whoever (the ‘who’, not the ‘what’) generates that content. But this has not prevented illustrators and artists in the US from filing the first lawsuits against these technologies for copyright infringement. For now it is difficult to know how far they will go, at a time when legislation around the world, surely also caught on the wrong foot, will have to evolve to accommodate what is yet to come with respect to the use of AI, and not only in the creative audiovisual sector.

Sugarboom. Nat Gutiérrez 2022. Midjourney+Photoshop+Topaz Gigapixel AI.

Conclusions?

Well, for my part, for now there are few: more questions than answers, which will have to be resolved in the short and medium term. What is clear to me is that artificial intelligence, in a relatively short period of time (less than 5 years), is going to be an inseparable part of our existence, technological or otherwise, just as happened with the arrival of the Internet and, subsequently, the emergence of social networks, with the indisputable impact that all this has had on humanity, for better and for worse.

As I mentioned at the beginning of this post, I have never closed myself to technological advances. Without them, I wouldn’t be able to do anything I do today, both professionally and creatively. But this doesn’t mean that I welcome every advance with open arms, as if it were nothing.

I have never thought that technology is a problem. Quite the contrary. No one can deny that technological advances have made a major contribution to improving our lives and, in the case of artificial intelligence, I have no doubt that it will bring about a major leap forward in this respect. After all, the automation of processes should help creators to spend their time creating and to develop their creative projects in a feasible, accessible and agile way.

But the problem is that any major technological advance entails a series of consequences that are not always positive. It is the toll that progress makes us pay (nothing is free) for being able to enjoy (or not) these advances, a toll that possibly has more to do with the uses that human beings make of technology, on a smaller or larger scale. It also points to a paradox that is difficult to understand in the era of free and open access to information and knowledge via the Internet: the speed at which technology advances is often not matched by the speed at which too many people’s thinking advances. One cannot help but wonder whether human beings, still so primitive in so many respects, are really prepared to understand and manage all this technological potential with common sense and a critical eye.

In the end, for me, it is a matter of trying things out, investigating, and assessing with my own experience both the benefits and the problems that any new technology may bring, trying to strike a balance between personal convictions and acceptance of the inevitable. And artificial intelligence is already inevitable. It is another train at high speed (very high in this case), on which one has to decide whether to jump on, let it pass or avoid being run over.

And I’ve been on a few of these ‘trains’ in my life.

To be continued?